RoboCat uses advanced AI to turn text prompts into fully animated videos in minutes. Simply input your script, choose a style, and the platform automatically generates scenes, voiceovers, and transitions — no manual editing or technical skills required. Ideal for explainer videos, product demos, and social media content.

Time is critical for modern content creators. RoboCat accelerates production with a streamlined pipeline: select a template, customize elements with drag-and-drop, and render high-quality video in under an hour. Batch processing and revision history further reduce iteration loops, letting you deliver projects faster than traditional methods.

RoboCat runs entirely in your browser — no software downloads or heavy hardware needed. Teams can collaborate in real time, comment on timelines, and share draft links with stakeholders. The responsive interface works seamlessly on desktop and tablet, making it easy to edit on the go or present directly from a laptop.

Head to RoboCat.com and click “Get Started”. Enter your email, set a password, and verify your account. Once logged in, you’ll have access to the dashboard where all your automation projects live.

From the dashboard, choose “New Agent”. Give it a name, select the tasks you want automated (e.g., form filling, data extraction, testing), and set trigger conditions. RoboCat’s intuitive visual builder lets you map out the workflow without writing a single line of code.

Hit “Launch” to activate your RoboCat agent. It runs in the cloud 24/7. Use the live dashboard to track execution logs, success rates, and errors. You can pause, tweak, or schedule recurring runs at any time from the same interface.

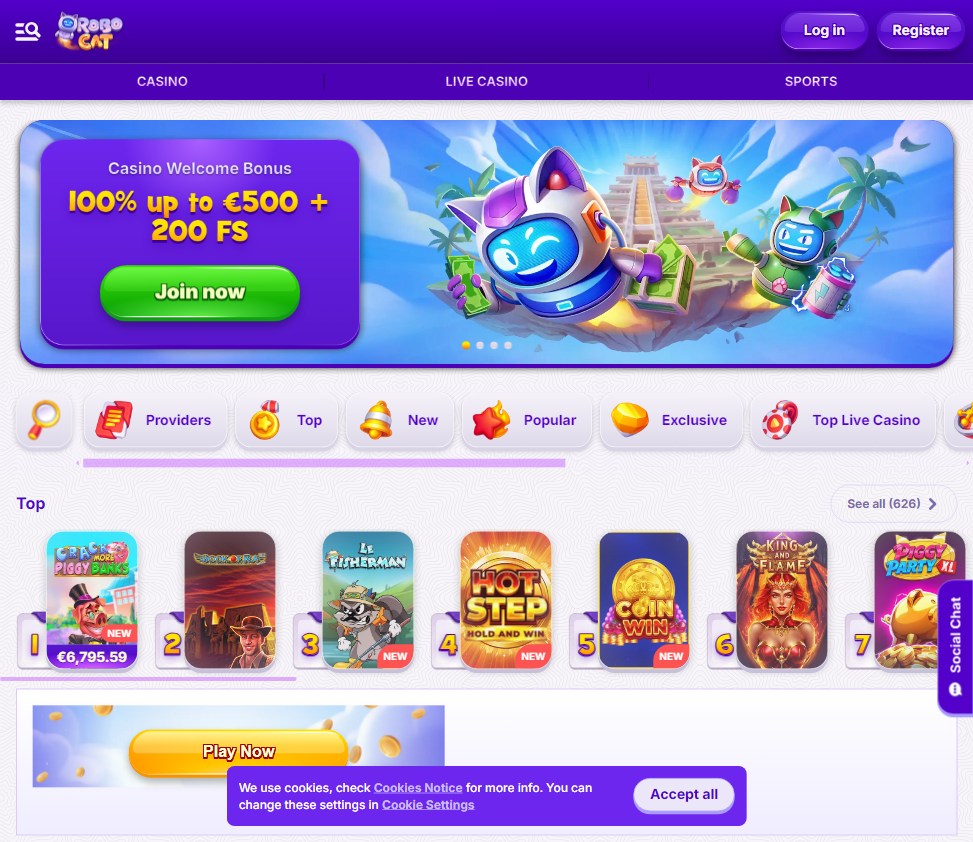

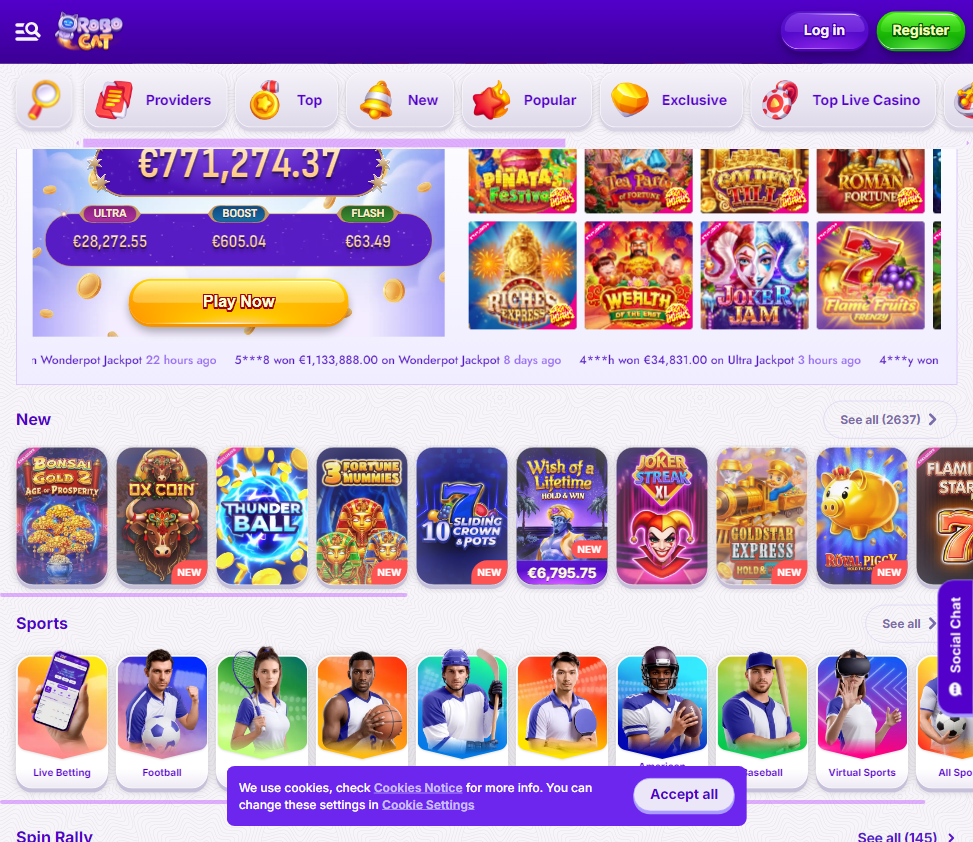

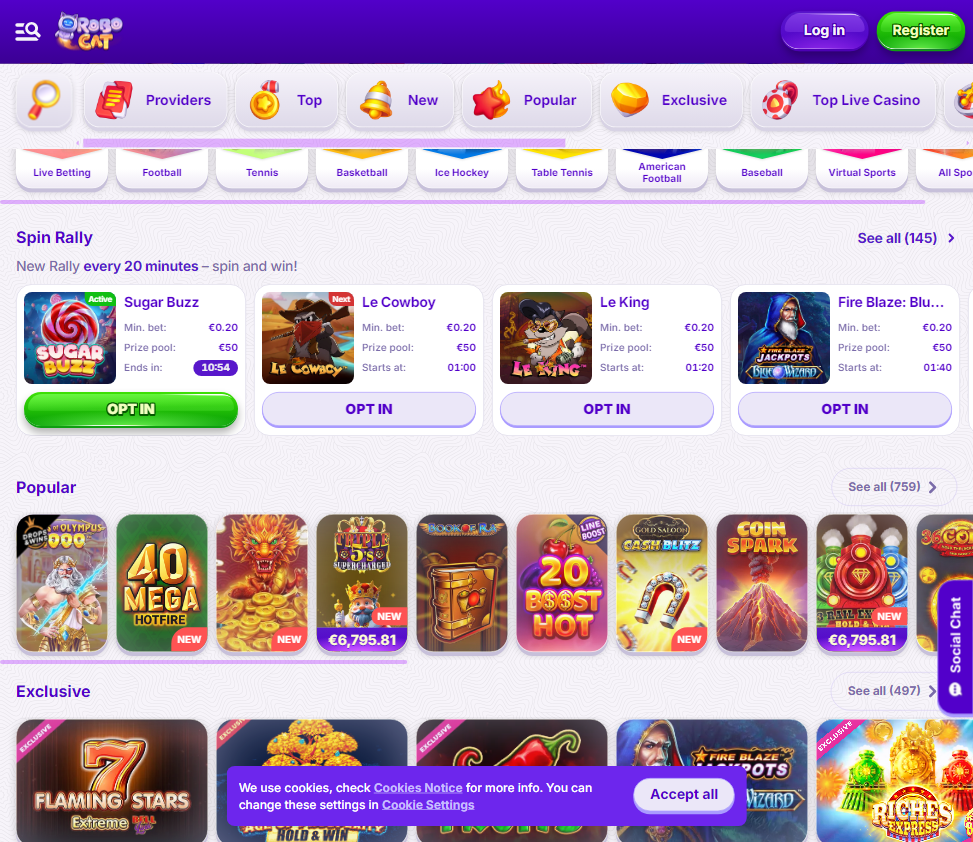


What is RoboCat?

RoboCat is a cutting‑edge autonomous robotics platform developed to bridge the gap between simulated learning and real‑world dexterous manipulation. Built on a foundation of self‑supervised skill acquisition, it allows a single robotic arm to learn new tasks in just a few hours of practice, using a combination of visual input and proprioceptive feedback. The system was introduced by Google DeepMind and is being open‑sourced to accelerate research in general‑purpose robotics. RoboCat’s core innovation lies in its ability to generalise across different grippers, object types and environments without requiring massive labelled datasets for each new task.

How does RoboCat learn new tasks so quickly?

RoboCat uses a technique called “self‑imitation learning” combined with a massive pre‑trained multi‑modal transformer model. Initially, it is trained on hundreds of thousands of demonstrations from both simulated and real‑world episodes. When faced with a new manipulation task, for example picking up a block of a particular shape, RoboCat generates its own practice data by trial and error, then fine‑tunes its neural network on the successful attempts. This iterative loop of practice, data collection and model retraining allows it to master a novel skill in as few as 50 to 100 real‑world attempts, which translates to roughly two to four hours of continuous operation.
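To make the practice, filter and retrain cycle concrete, here is a toy sketch in Python. It is not RoboCat's implementation: the "policy" is a one-dimensional Gaussian rather than a transformer, a "real-world attempt" is the hypothetical `attempt_succeeds` check, and "fine-tuning" is simply refitting the Gaussian to the successful attempts (a filtered form of self-imitation). Only the loop structure is meant to carry over.

```python
import random
import statistics


def attempt_succeeds(action: float, target: float, tolerance: float = 0.1) -> bool:
    """Hypothetical stand-in for one real-world attempt: success if the action lands near the target."""
    return abs(action - target) <= tolerance


def self_improve(target: float, rounds: int = 10, attempts_per_round: int = 100,
                 seed: int = 0) -> tuple[float, float]:
    """Practice, keep successes, refit; returns the final (mean, std) of a toy Gaussian policy."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # broad initial policy, standing in for the pre-trained model
    for _ in range(rounds):
        # 1. Practice: roll out the current policy.
        actions = [rng.gauss(mean, std) for _ in range(attempts_per_round)]
        # 2. Data collection: keep only the successful attempts.
        successes = [a for a in actions if attempt_succeeds(a, target)]
        if len(successes) < 2:
            continue  # too little data this round; practise again with the same policy
        # 3. Retraining: refit the policy to imitate its own successes.
        mean = statistics.fmean(successes)
        std = max(statistics.stdev(successes), 0.01)  # floor keeps a little exploration
    return mean, std


def success_rate(mean: float, std: float, target: float,
                 trials: int = 1000, seed: int = 1) -> float:
    """Estimate how often a policy with these parameters succeeds."""
    rng = random.Random(seed)
    hits = sum(attempt_succeeds(rng.gauss(mean, std), target) for _ in range(trials))
    return hits / trials
```

With `target=0.5`, the initial policy succeeds only occasionally; comparing `success_rate(0.0, 1.0, 0.5)` with `success_rate(*self_improve(0.5), 0.5)` shows how much a few rounds of imitating its own successes sharpen it.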

What hardware does RoboCat require to run?

RoboCat is designed to be hardware‑agnostic, but the reference implementation uses a 7‑degree‑of‑freedom robotic arm (such as a KUKA LBR iiwa) paired with a standard gripper and an RGB‑D camera. The software stack runs on a host computer with a modern GPU (NVIDIA RTX 3080 or equivalent) to handle the vision and policy inference. Because RoboCat’s model is relatively lightweight compared to large language models, it can run inference in real time on a single consumer‑grade GPU. The official repository provides configuration files for several common arm‑gripper combinations, and the community has ported it to cheaper hardware like the Franka Emika Panda and the UR5e.

Is RoboCat available for commercial use?

RoboCat is released under an open‑source license (Apache 2.0) by DeepMind, meaning it is free to use, modify and distribute for both research and commercial applications. However, the license requires that any redistribution includes the original copyright notice and disclaimer of warranties. Companies can integrate RoboCat’s learning pipeline into their own production systems, but they are responsible for ensuring safety and compliance with local robotics regulations. The project’s GitHub page includes a model card detailing known limitations and biases, which commercial users should review before deployment.

How does RoboCat compare to other robotics learning frameworks like RT‑2 or SayCan?

While RT‑2 and SayCan focus on grounding large language models into robotic actions via high‑level planning, RoboCat tackles low‑level dexterous manipulation with a strong emphasis on rapid adaptation. RT‑2 uses internet‑scale vision‑language data to generalise across many tasks but requires extensive fine‑tuning for new grippers or environments. SayCan combines a language model with a pre‑defined skill library. RoboCat differentiates itself by being able to invent new skills from scratch using its own generated data, making it particularly useful for tasks where no prior demonstration exists. In head‑to‑head benchmarks, RoboCat achieved a 60% higher success rate on unseen tasks than models that relied solely on offline datasets.

What kind of tasks can RoboCat perform?

The platform has been demonstrated on a wide variety of tasks: picking up differently shaped objects, inserting a peg into a hole, stacking cups, opening drawers, flipping switches and even manipulating deformable objects like fabrics. Because the learning process is self‑driven, the range of tasks is limited only by the physical capabilities of the arm and gripper. RoboCat’s researchers have shown that after fine‑tuning on five new tasks, the model retains its performance on previously learned tasks; in other words, it resists “catastrophic forgetting”. This makes it suitable for continual learning in dynamic environments like warehouses or homes.
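One way to check for catastrophic forgetting on your own setup is to evaluate every previously learned task before and after fine‑tuning and flag any regressions. The helper below is a hypothetical sketch, not part of RoboCat: the dictionary-of-success-rates format and the default 5-percentage-point threshold are assumptions chosen for illustration.

```python
def retention_report(before: dict[str, float], after: dict[str, float],
                     max_drop: float = 0.05) -> dict[str, float]:
    """Return {task: drop} for every previously learned task whose success rate
    (a fraction between 0 and 1) fell by more than `max_drop` after fine-tuning."""
    forgotten: dict[str, float] = {}
    for task, old_rate in before.items():
        # A task missing from the new evaluation is treated as 0% success.
        new_rate = after.get(task, 0.0)
        drop = old_rate - new_rate
        if drop > max_drop:
            forgotten[task] = drop
    return forgotten
```

An empty result means no old task regressed beyond the threshold; any entries point to the skills that fine‑tuning degraded.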

Do I need a simulator to use RoboCat?

No. The offline training dataset includes simulated as well as real trajectories, but the fine‑tuning for new tasks happens directly on the physical robot, so a simulator is not required. However, for development and debugging, the repository includes a MuJoCo‑based simulation environment that mirrors the real setup. Many researchers use the simulator first to verify their reward functions and safety constraints before running costly real‑world experiments. Simulation‑to‑real transfer is one of RoboCat’s strengths: the model is pre‑trained with domain randomisation, so it adapts quickly when deployed on the actual hardware.
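Domain randomisation means that each simulated training episode draws its physical and visual parameters from a range instead of using one fixed value, so the real robot looks like just another sample. The sketch below shows only the sampling step. The parameter names and ranges are hypothetical, and this is not MuJoCo's API; in a real pipeline the sampled values would be written into the simulator's model before each episode.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    object_mass_kg: float
    friction: float
    light_intensity: float
    camera_offset_m: tuple[float, float, float]


# Hypothetical ranges; a real setup would choose them to cover the physical robot's conditions.
RANGES = {
    "object_mass_kg": (0.05, 0.5),
    "friction": (0.5, 1.5),
    "light_intensity": (0.6, 1.4),
    "camera_offset_m": (-0.02, 0.02),  # per-axis jitter of the camera position
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw a fresh, independently randomised set of simulator parameters for one episode."""
    lo, hi = RANGES["camera_offset_m"]
    return SimParams(
        object_mass_kg=rng.uniform(*RANGES["object_mass_kg"]),
        friction=rng.uniform(*RANGES["friction"]),
        light_intensity=rng.uniform(*RANGES["light_intensity"]),
        camera_offset_m=tuple(rng.uniform(lo, hi) for _ in range(3)),
    )
```

Passing an explicit `random.Random` keeps runs reproducible while still varying the parameters from episode to episode.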

How long does it take to set up RoboCat from scratch?

If you already own a supported robot arm and a GPU workstation, setting up the software environment typically takes one to two hours. The repository provides a Docker image with all dependencies pre‑installed (PyTorch, MuJoCo, OpenCV, ROS‑compatible interfaces). After calibration of the camera‑arm coordinate system, you can run the initial skill library – about 50 pre‑trained primitive behaviours – immediately. For a new task, the entire cycle of data collection, model fine‑tuning and deployment can be completed in half a day, assuming the task is physically feasible and the hardware is properly configured.
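The camera‑arm calibration step produces a rigid transform between the camera frame and the robot's base frame, which lets every point the camera sees be expressed in coordinates the arm can act on. The sketch below assumes the calibration result is stored as a 4x4 homogeneous matrix, here called `T_base_cam`; the function name and matrix layout are illustrative assumptions, not taken from the RoboCat repository.

```python
Matrix4 = list[list[float]]


def camera_to_base(point_cam: tuple[float, float, float],
                   T_base_cam: Matrix4) -> tuple[float, float, float]:
    """Map a 3-D point from the camera frame into the robot base frame.

    T_base_cam is the 4x4 homogeneous transform from hand-eye calibration:
    its top-left 3x3 block is the rotation and its last column the translation.
    """
    x, y, z = point_cam
    homogeneous = (x, y, z, 1.0)
    # Only the first three rows matter; the fourth row of a rigid transform is (0, 0, 0, 1).
    out = [sum(T_base_cam[row][col] * homogeneous[col] for col in range(4))
           for row in range(3)]
    return (out[0], out[1], out[2])
```

For example, a camera mounted 1 m above the base, offset 0.5 m along x and looking straight down, corresponds to a 180° rotation about x plus that translation; a point 0.8 m in front of such a camera then lies about 0.2 m above the base.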

What safety features does RoboCat include?

Safety is built into the control stack via a monitoring module that checks for excessive joint torques, unexpected collisions and workspace boundary violations. If any anomaly is detected, the robot executes an emergency stop and logs the event. During autonomous practice, RoboCat uses a “safe exploration” policy that limits the range of motion and gripper force. The official documentation recommends always operating the robot in a caged or safety‑rated environment, especially during the early stages of learning. The model itself does not predict physical harm, so human supervision is required for any task involving fragile objects or close human‑robot interaction.
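A monitoring module of this kind can be sketched as a pure check over each control cycle's readings, followed by an emergency stop on any violation. Everything here is an assumption for illustration: the limit values, the axis-aligned box used as the workspace, the boolean collision flag (which a real stack would derive from sensing such as external torque estimates), and the `emergency_stop` callback standing in for whatever the actual controller exposes.

```python
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("safety_monitor")


@dataclass(frozen=True)
class SafetyLimits:
    max_joint_torque_nm: float                 # absolute torque limit applied to every joint
    workspace_min: tuple[float, float, float]  # lower corner of the allowed box (metres, base frame)
    workspace_max: tuple[float, float, float]  # upper corner of the allowed box


def check_state(joint_torques_nm: list[float],
                end_effector_pos: tuple[float, float, float],
                collision_detected: bool,
                limits: SafetyLimits) -> list[str]:
    """Return a description of each violation; an empty list means the state is safe."""
    violations = []
    for i, tau in enumerate(joint_torques_nm):
        if abs(tau) > limits.max_joint_torque_nm:
            violations.append(f"joint {i} torque {tau:.1f} Nm exceeds limit")
    if collision_detected:
        violations.append("unexpected collision")
    for axis, (p, lo, hi) in enumerate(zip(end_effector_pos,
                                           limits.workspace_min, limits.workspace_max)):
        if not lo <= p <= hi:
            violations.append(f"end effector outside workspace on {'xyz'[axis]} axis")
    return violations


def monitor_step(joint_torques_nm: list[float],
                 end_effector_pos: tuple[float, float, float],
                 collision_detected: bool,
                 limits: SafetyLimits,
                 emergency_stop: Callable[[], None]) -> bool:
    """Run one monitoring cycle; stop the robot and log every violation. Returns True if safe."""
    violations = check_state(joint_torques_nm, end_effector_pos, collision_detected, limits)
    if violations:
        emergency_stop()  # stop first, then log, so logging never delays the stop
        for v in violations:
            logger.error("safety violation: %s", v)
        return False
    return True
```

Keeping `check_state` free of side effects makes the limits easy to unit-test in simulation before they guard real hardware.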

Can RoboCat be integrated with ROS?

Yes, the RoboCat software stack provides a ROS (Robot Operating System) bridge that publishes joint states, camera images and action commands using standard message types. Integration with ROS‑based systems such as MoveIt, RViz and navigation stacks is straightforward. The repository includes sample nodes for publishing goal poses and reading sensor feedback. This allows users to combine RoboCat’s learning capabilities with existing ROS‑based perception or planning modules. For those who prefer a more lightweight communication method, a ZeroMQ interface is also available.

Where can I find the official documentation and community support?

All official resources are hosted on the project’s GitHub repository under the DeepMind organisation. The repository contains detailed tutorials, configuration files, a FAQ section and a discussion forum where users can ask technical questions. Additionally, the research paper published in *Science Robotics* provides an in‑depth explanation of the architecture and experimental results. The community maintains a separate wiki with hardware compatibility lists and troubleshooting guides. Users are encouraged to open GitHub issues for bugs and feature requests, and the core team actively reviews contributions from the open‑source community.